Hello,

A startup called Starcloud recently floated an idea that sounds like it belongs in a science fiction novel.

They aim to launch data centers into orbit, power them entirely with solar energy, and use them to mine Bitcoin in space. At first glance, it sounds like the kind of pitch you’d expect from someone who has spent too much time staring at Starship launch videos.

Why would anyone send thousands of servers into orbit when we already have entire continents to build them on?

But the more you look at it, the less ridiculous it becomes, because the digital economy is quietly running into a constraint that engineers cannot code their way around: energy.

In 2024, data centers consumed roughly 415 terawatt hours of electricity, about 1.5% of all global power usage. By the end of the decade, this figure is expected to climb to 945 terawatt hours, roughly equivalent to Japan’s entire electricity consumption.
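To put those figures in proportion, here is a quick back-of-envelope check. It uses only the numbers above. It also reads "the end of the decade" as 2030 and assumes steady compound growth between the two points. That is a simplification, not how any forecast was actually built.

```python
# Back-of-envelope check on the data-center electricity figures quoted above.
# 415 TWh (2024), the ~1.5% share and 945 TWh come from the text; treating the
# end of the decade as 2030 and assuming constant yearly growth are illustrative.

def implied_global_demand_twh(dc_twh: float, share: float) -> float:
    """Total global electricity use implied by data centers' share of it."""
    return dc_twh / share

def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate needed to go from start to end over `years`."""
    return (end / start) ** (1 / years) - 1

global_2024 = implied_global_demand_twh(415, 0.015)
growth = cagr(415, 945, 2030 - 2024)

print(f"Implied global demand in 2024: ~{global_2024:,.0f} TWh")
print(f"Implied data-center growth rate: ~{growth:.1%} per year")
```

On these assumptions, data-center demand would need to grow by roughly 15% a year, more than doubling in six years, against a global total of roughly 27,700 TWh.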

And that is before any of the next generation of AI infrastructure we keep talking about even arrives.

A large data center today can consume as much electricity as 100,000 households. Some facilities currently under construction are expected to require 20 times that amount. Across the industry, more than 90% of data-center operators now cite power availability as their top constraint, ahead of chips, networking hardware, or real estate.

In other words, the bottleneck has shifted. For decades, the digital economy advanced by building faster chips, better software, and more powerful algorithms. Now, the constraint is much more physical.

In this piece, I’ll explore why the global race for compute is increasingly becoming a race for cheap energy, how Bitcoin miners and AI infrastructure are competing for the same power sources, and where the search for electricity could push the next generation of digital infrastructure.

The Hidden Bottleneck: Energy is Becoming the New Compute

For most of the history of computing, the focus was primarily on silicon.

The industry was obsessed with transistor density, clock speeds, and chip architecture. Moore’s Law became the metronome of technological progress. Engineers designed faster chips, companies packed more transistors into smaller spaces, and software developers used that additional power to build increasingly complex applications.

From personal computers to smartphones to cloud computing, every wave of digital infrastructure was ultimately enabled by improvements in semiconductor performance. When systems required more computing power, the solution was always either to build better processors or deploy larger clusters of machines. Compute was scarce, but the semiconductor industry steadily solved that scarcity.

Modern AI systems operate very differently.

Instead of relying on a single powerful machine, they run on massive clusters of specialized processors, constantly exchanging data while performing trillions of calculations. Training large language models (LLMs) requires thousands of GPUs working together for weeks at a time, and even running these systems in production demands enormous computing infrastructure.

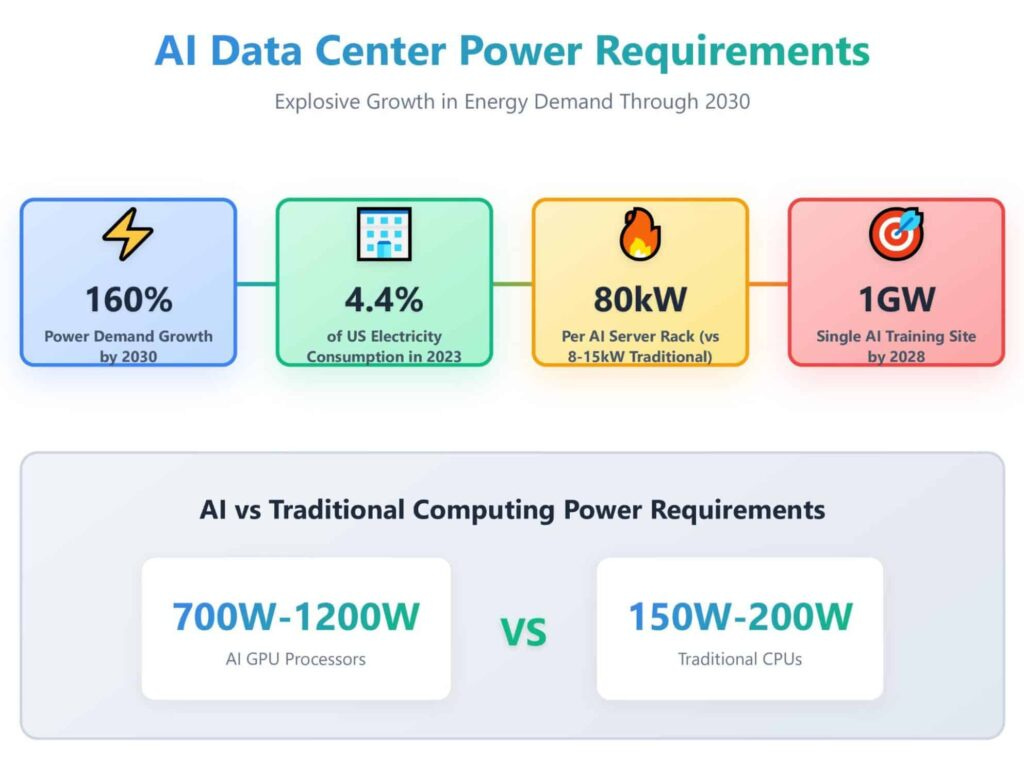

The scale of these workloads is fundamentally different from traditional computing. A typical server rack in a traditional data center draws 5 to 10 kilowatts of power. AI racks equipped with modern accelerators can draw anywhere between 40 and 100 kilowatts, depending on the configuration.

Multiply that difference across thousands of racks, and the facility starts to look like industrial infrastructure. Large AI campuses now run tens of thousands of these processors continuously, pushing total power consumption into the range of heavy industry. At that scale, a data center is no longer just a building filled with computers. It is an energy-intensive plant whose primary input is electricity and whose output is compute.
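A rough sizing sketch shows how quickly rack-level numbers add up. Only the per-rack range comes from the text. The other inputs are hypothetical values, not numbers from any real campus:

- the rack count
- the PUE (power usage effectiveness, the ratio of total facility power to IT power)
- the household consumption figure

```python
# Rough facility sizing: racks x kW per rack x PUE -> grid load and annual energy.
# RACKS, PUE and KWH_PER_HOUSEHOLD are hypothetical illustration inputs.

HOURS_PER_YEAR = 8760

def facility_load_mw(racks: int, kw_per_rack: float, pue: float) -> float:
    """Grid draw in MW; PUE scales IT load up to cover cooling and other overhead."""
    return racks * kw_per_rack * pue / 1000

def annual_energy_gwh(load_mw: float, utilization: float = 1.0) -> float:
    """Energy over a year for a load running at the given average utilization."""
    return load_mw * HOURS_PER_YEAR * utilization / 1000

def household_equivalents(energy_gwh: float, kwh_per_household: float) -> float:
    """How many households would use the same energy in a year."""
    return energy_gwh * 1_000_000 / kwh_per_household

RACKS = 2_000               # hypothetical campus size
PUE = 1.3                   # assumed power usage effectiveness
KWH_PER_HOUSEHOLD = 10_000  # assumed annual consumption of one household

results = {}
for label, kw in [("traditional, 10 kW/rack", 10), ("AI, 100 kW/rack", 100)]:
    load = facility_load_mw(RACKS, kw, PUE)
    energy = annual_energy_gwh(load)
    homes = household_equivalents(energy, KWH_PER_HOUSEHOLD)
    results[label] = (load, energy, homes)
    print(f"{label}: {load:,.0f} MW, {energy:,.0f} GWh/yr, ~{homes:,.0f} households")
```

Under these assumptions, the same 2,000-rack footprint jumps from roughly 26 MW to 260 MW when the racks move from 10 kW to 100 kW. That is the difference between a large commercial load and a single site demanding hundreds of megawatts.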

For a long time, this distinction did not matter much because electricity was assumed to be readily available. Data centers were typically built near population centers, where fiber connectivity, engineering talent, and reliable infrastructure already existed. Energy was treated as a background utility, something the grid would simply provide whenever needed.

But the newest generation of AI infrastructure is arriving faster than the electrical systems designed to support it. Designing and constructing a large computing facility can take only a few years. Expanding transmission lines, substations, and generation capacity usually requires much longer timelines due to permitting, environmental approvals, and construction cycles.

Companies building large AI clusters are discovering that securing the hardware is no longer the hardest part. Powering those machines is.

Electrical grids were designed for gradual growth across cities and industries, not for facilities that suddenly demand hundreds of megawatts in a single location. Data center proposals now look like industrial power loads, the kind utilities typically plan for over decades rather than years. Meeting that demand requires new substations, upgraded transmission infrastructure, and additional generation capacity. All of these take far longer to deliver than the computing campus they are meant to serve.

As electricity becomes the constraint, the logic of where computing infrastructure is built begins to mirror the patterns of earlier industrial eras. Energy-intensive industries have always followed power. Aluminium smelters clustered around hydroelectric dams. Steel plants emerged where energy and raw materials were abundant.

Now, compute is beginning to follow the same logic. Regions capable of delivering large and reliable electricity supplies suddenly become attractive for entirely new reasons. Surplus hydroelectric power, abundant renewable generation, or underutilized energy infrastructure can transform remote areas into viable locations for large computing facilities.

Bitcoin mining reached this conclusion years ago. Miners built their entire industry around finding the cheapest electricity. Their operations spread across hydro dams, wind farms, and remote energy sites because the output of mining does not depend on where the machines are physically located.

In doing so, miners unintentionally mapped the world’s pockets of cheap electricity.

The Global Hunt for Cheap Energy

Bitcoin miners did not just find cheap electricity. They also uncovered something even more interesting: large parts of the global energy system are surprisingly inefficient.

Electricity is difficult to store and expensive to move over long distances. Power grids must constantly balance supply and demand in real time. When more electricity is produced than the grid can absorb, the surplus simply goes to waste.

This happens more often than most people realise. Solar farms frequently generate more electricity at midday than the grid can carry to cities. Wind farms can produce large bursts of power during the night when demand is low. In both cases, grid operators often have no choice but to shut down turbines or disconnect solar panels, even though the energy is available.

In California alone, over 3 terawatt hours of renewable electricity were curtailed in 2023 because the grid could not absorb it.

For miners, this waste looked like an opportunity. Instead of building facilities where electricity is already expensive, they began placing machines directly next to energy sources that struggled to find buyers. Wind farms in West Texas, hydroelectric dams in remote valleys, and geothermal plants in sparsely populated regions became attractive locations.

If electricity could not easily travel to cities, the computers would travel to the electricity.

This approach created some strange economics. During periods of oversupply, electricity prices can fall to zero. Occasionally, they go negative, meaning generators pay someone to consume their power rather than shut down production.

Mining operations thrive in these conditions. In Texas, several mining farms now operate directly alongside renewable energy projects that regularly produce excess electricity. Instead of curtailing production, power developers sell the surplus energy to miners who can run their machines whenever electricity becomes cheap.
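The on/off logic can be sketched in a few lines: run only in hours when power costs less than the price at which mining stops paying for itself. The hourly prices, the 100 MW load and the $50/MWh breakeven are made-up values for illustration. The sketch also ignores contracts, fees and ramp-up time.

```python
# Minimal sketch of price-responsive mining: run only when power is cheaper
# than a breakeven price. All inputs below are hypothetical.

def dispatch(prices_per_mwh: list[float], breakeven: float, load_mw: float):
    """Return (hours run, net electricity cost) for a fully flexible load.

    A negative price means the miner is paid to consume, so those hours
    reduce the total cost.
    """
    hours_on = 0
    cost = 0.0
    for price in prices_per_mwh:
        if price < breakeven:
            hours_on += 1
            cost += price * load_mw  # one hour at load_mw uses load_mw MWh
    return hours_on, cost

# Hypothetical hourly prices ($/MWh) spanning a windy night and a hot afternoon
prices = [12, 5, -8, -15, 0, 20, 45, 60, 35, 250, 900, 40]
hours, cost = dispatch(prices, breakeven=50, load_mw=100)
print(f"Ran {hours} of {len(prices)} hours, net power cost ${cost:,.0f}")
```

In this example, the machines run through the cheap and negative-price hours and sit out the spikes. The hours when the miner is paid to consume partly offset what it pays the rest of the time.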

Miners also discovered another advantage: they are extremely flexible consumers.

Unlike factories or manufacturing plants, mining facilities can shut down almost instantly without disrupting any physical production process. When electricity demand spikes, operators simply switch off their machines.

In Texas’s ERCOT grid, mining companies now participate in demand response programs that reward large consumers for reducing electricity usage during peak demand. At times, miners have earned more money from these grid payments than from the Bitcoin they would have mined.
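During a grid event, the choice comes down to comparing two numbers per megawatt-hour:

- what mining would net after paying for power
- what the program pays to switch off

The function name and all figures below are hypothetical. Real ERCOT programs have their own eligibility rules and payment formulas, which this sketch ignores.

```python
# Hypothetical helper: for one grid-event hour, compare mining with curtailing.
# Ignores program rules, capacity payments and contract terms (assumptions).

def best_action(mining_revenue_per_mwh: float, power_price: float,
                credit_per_mwh: float) -> tuple[str, float]:
    """Return the more valuable option and its value per MWh of load."""
    mining_margin = mining_revenue_per_mwh - power_price  # net from running
    if credit_per_mwh > mining_margin:
        return "curtail", credit_per_mwh  # paid to switch off, no power bill
    return "mine", mining_margin

normal_hour = best_action(mining_revenue_per_mwh=80, power_price=30, credit_per_mwh=0)
event_hour = best_action(mining_revenue_per_mwh=80, power_price=40, credit_per_mwh=120)
print(normal_hour)  # ('mine', 50)
print(event_hour)   # ('curtail', 120)
```

The second case matches the pattern in which grid payments beat mining income: mining would still turn a profit, but switching off is worth more.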

One large mining operator reportedly earned around $31 million in grid credits in 2023 by temporarily shutting down operations during high-demand periods.

In effect, mining turned wasted electricity into a digital commodity.

This has led to a quiet reshaping of the global mining map. When China banned Bitcoin mining in 2021, large portions of the industry relocated within months. The United States rapidly became the largest mining hub, while countries with cheap coal or hydroelectric power temporarily absorbed large amounts of capacity.

Few industries move this quickly. Mining machines can be packed into shipping containers, transported across borders, and redeployed whenever electricity becomes cheap enough to justify the move.

By chasing these pockets of energy, miners unintentionally revealed that if electricity becomes the limiting factor, computing will gradually move towards wherever large amounts of energy already exist.

Some of those places may lie far from the world’s major population centers.

When the Search for Energy Leaves Earth

So where is energy most abundant?

On Earth, the answer is complicated. Electricity must be generated, transmitted across long distances, and distributed through power grids that were never designed for massive clusters of machines. Transmission lines get congested. Renewable energy gets curtailed. Entire regions produce electricity that cannot easily reach the places where computing infrastructure currently exists.

Looking beyond the surface of the planet changes the equation entirely. Solar energy is dramatically more abundant in orbit than on Earth’s surface. Outside the atmosphere, sunlight is stronger and unaffected by weather or clouds. In the right orbit, a spacecraft can also stay out of Earth’s shadow for most of the year. Solar panels placed there can receive near-constant sunlight, producing electricity far more consistently than panels on the ground.
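A rough per-square-metre comparison gives a sense of scale. Two inputs are standard reference values:

- the 1,361 W/m² solar constant, which measures sunlight above the atmosphere
- the roughly 1,000 W/m² peak used to rate panels on the ground

The two capacity factors are assumptions. Panel efficiency is left out because it applies to both cases.

```python
# Annual sunlight reaching one square metre of panel, ground vs orbit.
# 1361 W/m^2 (solar constant) and 1000 W/m^2 (ground rating peak) are reference
# values; both capacity factors are illustrative assumptions.

HOURS_PER_YEAR = 8760

def annual_kwh_per_m2(irradiance_w_m2: float, capacity_factor: float) -> float:
    """Energy per square metre per year at a given peak irradiance and capacity factor."""
    return irradiance_w_m2 * capacity_factor * HOURS_PER_YEAR / 1000

ground = annual_kwh_per_m2(1000, 0.20)  # assumed sunny ground site
orbit = annual_kwh_per_m2(1361, 0.99)   # assumed near-continuous sunlight in orbit
print(f"Ground: ~{ground:,.0f} kWh/m^2/yr, orbit: ~{orbit:,.0f} kWh/m^2/yr "
      f"({orbit / ground:.1f}x)")
```

Under these assumptions, an orbital panel collects several times more energy per year. Most of the gain comes from the absence of night and weather rather than from the stronger sunlight itself.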

For compute infrastructure that consumes enormous amounts of power, consistency matters as much as total output.

One of the highest operational costs of modern data centers is electricity. Power is required not only to run processors but also to cool them. Entire engineering disciplines now exist to manage airflow systems, liquid cooling networks, and heat dissipation inside facilities packed with thousands of machines.

Space changes the cooling problem as well. In a vacuum, waste heat is shed by radiating it away rather than by moving air or water through chillers. Meanwhile, solar energy can be harvested continuously without the variability that renewable generation faces on Earth. In theory, a computing facility operating in orbit could run on a steady stream of solar power without competing with cities, factories, or households for electricity.
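Radiating heat away is governed by the Stefan-Boltzmann law, P = εσAT⁴, which ties radiator area to how much power the facility uses. The sketch below makes four simplifying assumptions:

- the radiator sits at a uniform 300 K
- it emits from both faces
- its emissivity is 0.9
- it absorbs no sunlight or Earth infrared

Real designs would need more area than this idealised figure.

```python
# Radiator sizing with the Stefan-Boltzmann law: P = emissivity * sigma * A * T^4.
# Assumptions: uniform 300 K radiator, two radiating faces, emissivity 0.9,
# and no absorbed sunlight or Earth infrared.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)

def radiator_area_m2(heat_w: float, temp_k: float,
                     emissivity: float = 0.9, faces: int = 2) -> float:
    """Radiator area needed to reject heat_w watts at temperature temp_k."""
    flux_per_m2 = faces * emissivity * SIGMA * temp_k ** 4
    return heat_w / flux_per_m2

area = radiator_area_m2(1_000_000, 300)
print(f"~{area:,.0f} m^2 of radiator to reject 1 MW at 300 K")
```

At roughly 1,200 m² per megawatt under these idealised assumptions, radiators, not just solar panels, would likely set much of the physical size of an orbital data center.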

Of course, operating in space introduces a different set of challenges.

Launching hardware into space is expensive. Satellites must survive radiation, extreme temperature swings, and the constant risk of orbital debris. Replacing faulty equipment hundreds of kilometers above Earth is far more complicated than sending a technician into a warehouse-sized data center.

For now, these constraints make orbital infrastructure feel more like an experiment than an immediate solution. But the fact that companies are considering ideas like this says something important about where computing is headed.

For most of the digital era, the primary bottleneck was computational power. Engineers responded by building better processors and larger data centers. That model worked because electricity was assumed to be readily available.

But now, with AI, hyperscale cloud infrastructure, and energy-intensive cryptographic networks pushing demand into ranges that resemble industrial power consumption, the limiting factor is no longer just processors or software.

It is energy. And throughout history, industries that depend on energy have always migrated towards wherever that energy is easiest to access. Compute may be approaching a similar turning point. In some cases, that means building next to remote power projects. In others, it means designing infrastructure for environments that were never previously considered part of the digital economy.

Looking beyond Earth simply extends that same logic. Orbital data centers are not really about placing computers in space for novelty. They represent a different way of thinking about where compute should live: next to an energy source that is not limited by land, grids, or transmission lines.

Orbital data centers may still be decades away, but the direction of the conversation is revealing. For most of its history, the expansion of computing was defined by breakthroughs in semiconductors. The next phase may depend just as much on breakthroughs in energy infrastructure.

That’s it for today! See you next week!

Until then, stay curious!

Token Dispatch is a daily crypto newsletter handpicked and crafted with love by human bots. If you want to reach Token Dispatch’s community of 200,000+ subscribers, explore partnership opportunities with us 🙌


Disclaimer: This newsletter contains analysis and opinions of the author. Content is for informational purposes only, not financial advice. Trading crypto involves substantial risk; your capital is at risk. Do your own research.