I write, I write a lot, until something true shows up that I didn’t put there. But I don’t think that’s special to me. That’s just how humans work. We don’t know what we feel until we feel it out loud. Neither do we know what we believe until we hear ourselves say it.

Tough to root for the writer, the creator, the art in you when the world is looking for the thing that ships on time.

One thing I have been sitting with lately is that the most valuable thing is going to be being human, as human as you possibly can be. And tell me, how hard can that be? We are making sense of being alive in a direction we didn’t plan, toward a conclusion we couldn’t have predicted.

Ben Roy said something here that I’ve been trying to say for a while. He got there first and got there better. His brain sees the world through his own particular life. His arguments, his places, his half-finished books. Mine does the same. Yours does too. No model has ever lived a day, lost an argument, or changed its mind about something it once believed completely. Yet.

It doesn’t have a nightstand. We do. That’s what you’re about to read.

—Thejaswini

Everyone I know who works in tech is having some flavor of existential crisis these days. People are worried that advanced LLMs are going to replace all knowledge work, possibly even in the near future.

I think part of this anxiety is reasonable. The AI labs have released impressive updates over the last six months, and the industry is alive with what feels like exponential change. Yet despite all the hoopla, what strikes me as inconsistent is that I still find AI systems incredibly underwhelming when it comes to writing.

What I want to do with this essay is reason through why I think that’s the case and by extension wrestle with where I think human writers fit in a world of (increasingly) elite artificial intelligence.

To start, I want to be specific about what I mean by writing because it’s important: writing is thinking on paper. It’s an extension of someone’s mind made manifest in the world through words with the purpose of passing along a container of meaning from one person to another.

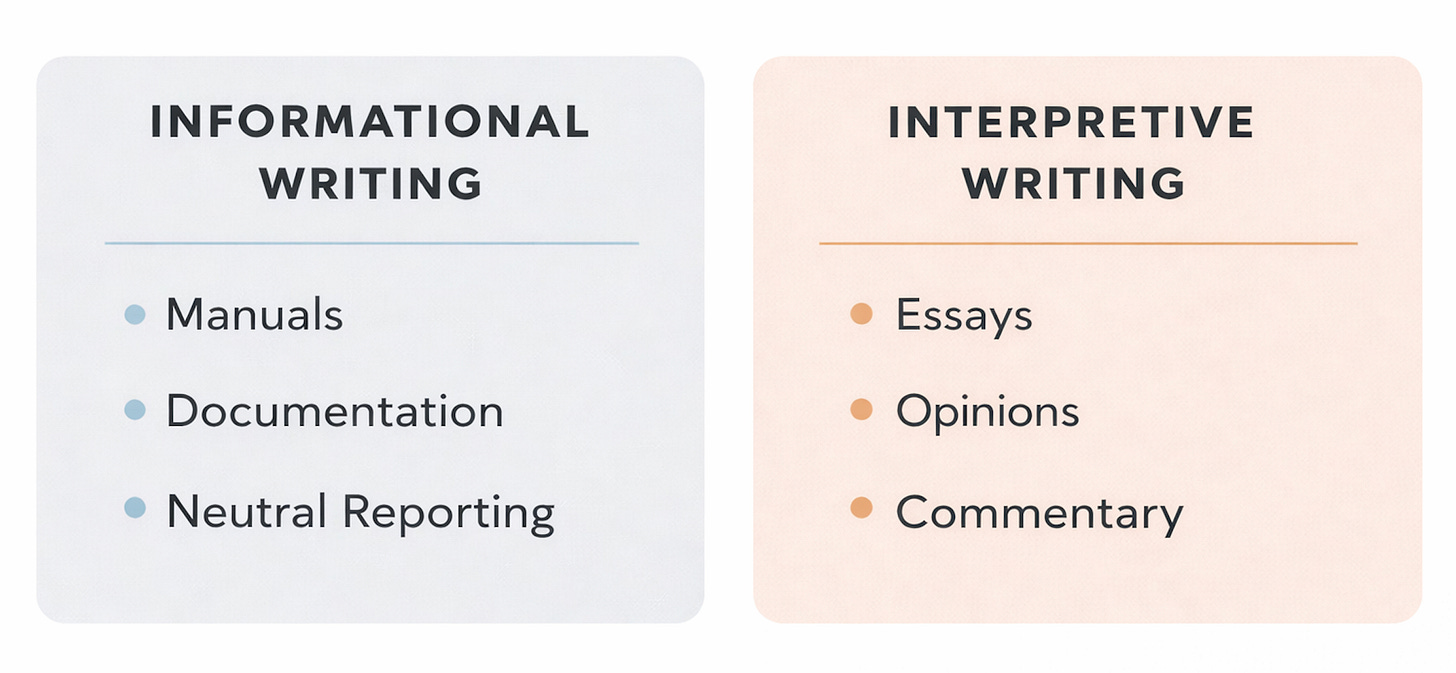

I realize that’s a broad starting point, so let’s go one level deeper and split writing into two sub-categories: informational writing and interpretive writing. Informational writing is about the transmission of facts. It’s the world of documentation, instruction manuals, and neutral reporting. Interpretive writing is about sharing a perspective. It’s the world of essays, opinions, and commentary.

I acknowledge that life is more complicated than a simple binary, and, yes, every piece of writing has some subjectivity in how its information is presented. But for the sake of argument, let’s say that every piece of writing has a dominant intent that falls into one of those two categories.

LLMs are great at informational writing. They can create a memo from a voice call, summarize an article, draft a simple contract, and so on. That’s useful for work purposes, but it’s not what I’m talking about here. When I say LLMs are weak at writing, what I mean is they’re weak at interpretive writing. They do poorly when trying to write with a consistent subjective lens. They struggle to have an opinion.

One final clarification before moving forward: let’s use essays as the sole example of interpretive writing so we have a concrete form to keep in mind as we discuss these ideas. And while we’re here, I’ll give some background on what an essay actually is so we’re ultra clear.

The word essay comes from the French “essai,” meaning a trial or an attempt (from the verb “essayer,” to try). The connotation is of wrestling with words to work through a problem. So we can say that an essay is struggle in written form, and so far, the machines can’t write that way. There are two reasons why I think this is the case. Let’s consider both.

The first problem with LLMs writing essays is that the entire process of prompting one of these systems for an output misunderstands what the act of writing is.

People fundamentally can’t prompt good essays into existence because writing is not a top-down exercise of applying knowledge you have upfront and asking an LLM to create something. AI agents also can’t create good essays for the same reason. Even though their step-by-step reasoning is more complex and iterative than human prompting, a chain of thought is still trying to accomplish a predefined goal.

By contrast, real writing is bottom-up. You don’t know what you want to say in advance. It’s a process of discovery where you start with a set of half-baked ideas and work with them in non-linear ways to find out what you really think.

There’s a Flannery O’Connor quote that speaks to this where she says, “I write because I don’t know what I think until I read what I say.” I’m the same way. I don’t think people can skip doing the work of wrestling with ideas because that’s the core chaotic energy that underpins good writing. It’s how you figure out what you believe and how you want to communicate it.

There’s also a more philosophical extension to this point, which is that human brains function differently than LLMs. They’re squishier. Daniel Kahneman’s famous book, Thinking, Fast and Slow, has a helpful framework to explain this difference. He describes two types of thinking. There’s System 1 thinking, which is fast, automatic, and driven by pattern recognition. Then there’s System 2 thinking, which is slow, methodical, and reflective.

Humans think in both modes. LLMs also “think” in both modes.

For System 1 thinking, a human makes snap judgments all the time about people they see, posts they read on the internet, or whatever else. Similarly, an LLM can take in a simple query and give us a one-shot output in response based on the model’s training.

For System 2 thinking, a human takes in ideas, considers them, and through conscious effort can produce new ideas. Similarly, reasoning models can think for extended periods of time, consider a problem, and produce novel outputs.

The difference, in both types of thinking, is that LLM “thought,” or inference, is at its core mathematically deterministic, whereas human thought is delightfully messy.

Once a model finishes training, its weights are saved and its thinking function becomes static. Those weights are a set of numbers that, from that point on, don’t change. When a model thinks, it takes an input and iteratively chucks it into a vortex of math (its weights and operations), which then produces an output. It’s a predictive exercise based on statistical associations, and it only feels alive because generating each word involves some pseudo-random sampling.
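To make the “static weights plus pseudo-random sampling” idea a little more concrete, here’s a toy sketch in Python. It is not how any real model is built: the `FROZEN_WEIGHTS` table, the example prompt, and the function names are all hypothetical stand-ins for a trained network with billions of parameters. What it tries to show is the split described above: the scoring step is a fixed function that returns the same numbers every time, and the sense of variety comes only from drawing a word at random from those scores.

```python
import math
import random
from typing import Dict

# Hypothetical toy "model": a frozen table of scores (logits) for the next word.
# A real LLM computes these scores from its fixed weights; this table only
# stands in for that fixed function.
FROZEN_WEIGHTS: Dict[str, Dict[str, float]] = {
    "the sky is": {"blue": 3.0, "grey": 1.5, "falling": 0.2},
}


def next_word_logits(prompt: str) -> Dict[str, float]:
    """Deterministic step: the same prompt always yields the same scores."""
    return FROZEN_WEIGHTS[prompt]


def sample_next_word(prompt: str, temperature: float, rng: random.Random) -> str:
    """Pseudo-random step: turn the fixed scores into probabilities, then draw one word."""
    logits = next_word_logits(prompt)
    words = list(logits)
    # Softmax with temperature: lower temperature concentrates probability on the top word.
    scaled = [logits[w] / temperature for w in words]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]
    return rng.choices(words, weights=probs, k=1)[0]


if __name__ == "__main__":
    prompt = "the sky is"
    # The scores never change between calls.
    print(next_word_logits(prompt) == next_word_logits(prompt))  # True
    # Different random seeds can produce different words from the same scores.
    for seed in range(3):
        print(sample_next_word(prompt, temperature=1.0, rng=random.Random(seed)))
```

Re-running with the same seed reproduces the same word, which is the sense in which even the “random” part is pseudo-random.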

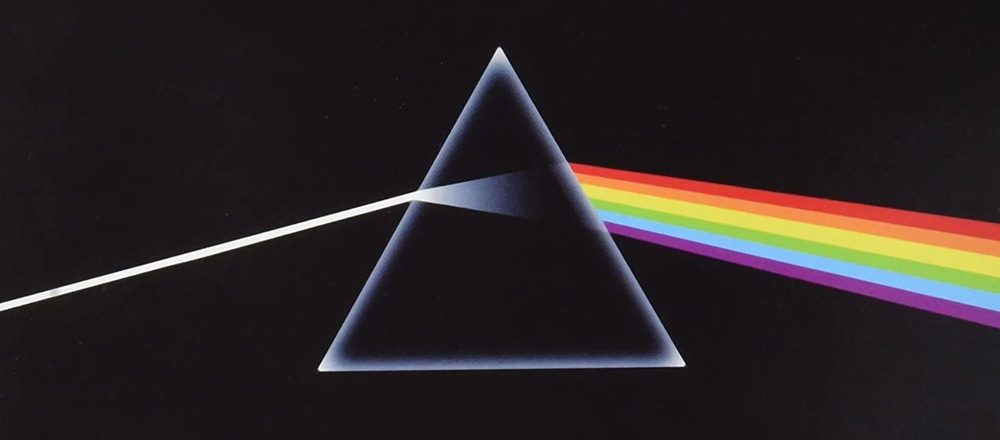

Human thinking, by contrast, is more like filtering ideas through a set of “weights” that are constantly in flux: our accumulated life experiences and the way we keep interpreting and reinterpreting them. The process is alive.

What happens is we take an input thought and refract it through the prism of our humanity (our memories, the places we’ve lived, the arguments we’ve had, the people we know, and infinite other bits and bobs of life experience) to see multiple, blended, possible outputs on the other side simultaneously. It’s like the prism on the cover of Pink Floyd’s The Dark Side of the Moon, where normal light is the input, the rainbow is the set of outputs, and the prism is someone’s brain.

So, both LLMs and human brains can think and compose writing. That’s cool. The issue is that the way these machines produce words leads to sterile outputs. Like, it’s always obvious to me when someone shares writing they’ve prompted, because the outputs tell on themselves. The reason is that LLMs lack the unique perspective of a human mind at work (or play).

There’s a visual analogy I like to use when comparing these two kinds of writing which is that a good essay is like a cathedral of ideas. It’s a work of craft, beauty, and the quirks of time and place. But an LLM attempt at an essay is like a glass skyscraper. It’s functional. It’s a building, sure. But if you looked at a picture of it, you’d have a difficult time telling whether it was located in Portland, Atlanta, or Denver because it has no soul.

The best counterpoint here is that I simply have a skill issue on my hands. Maybe I’m not clever enough, and if only I knew how to more skillfully orchestrate LLMs or swarms of editorial AI agents, then I’d be able to create works of magical, overflowing craftsmanship with them.

Maybe.

I do think people with specialized knowledge of writing can create better outputs with an LLM. Agents can get to slightly better outputs as well through iterative loops. But there’s an upper bound to how far you can get in terms of making an LLM compose something compelling because writing is a complex, adaptive process and these systems operate on a flattened representation of that.

The second problem is that LLMs struggle to generate meaningful writing because they lack context. They simply can’t take in (or process) the staggering amount of information that’s involved in the act of a person having an opinion.

I recognize these models have proven that they have PhD-level intelligence in some areas. They can win math Olympiads. They’re trained on most of recorded history. There’s no question that their form of alien cognition is impressive, but it’s just not equivalent to the neural network of my brain or yours when it comes to writing.

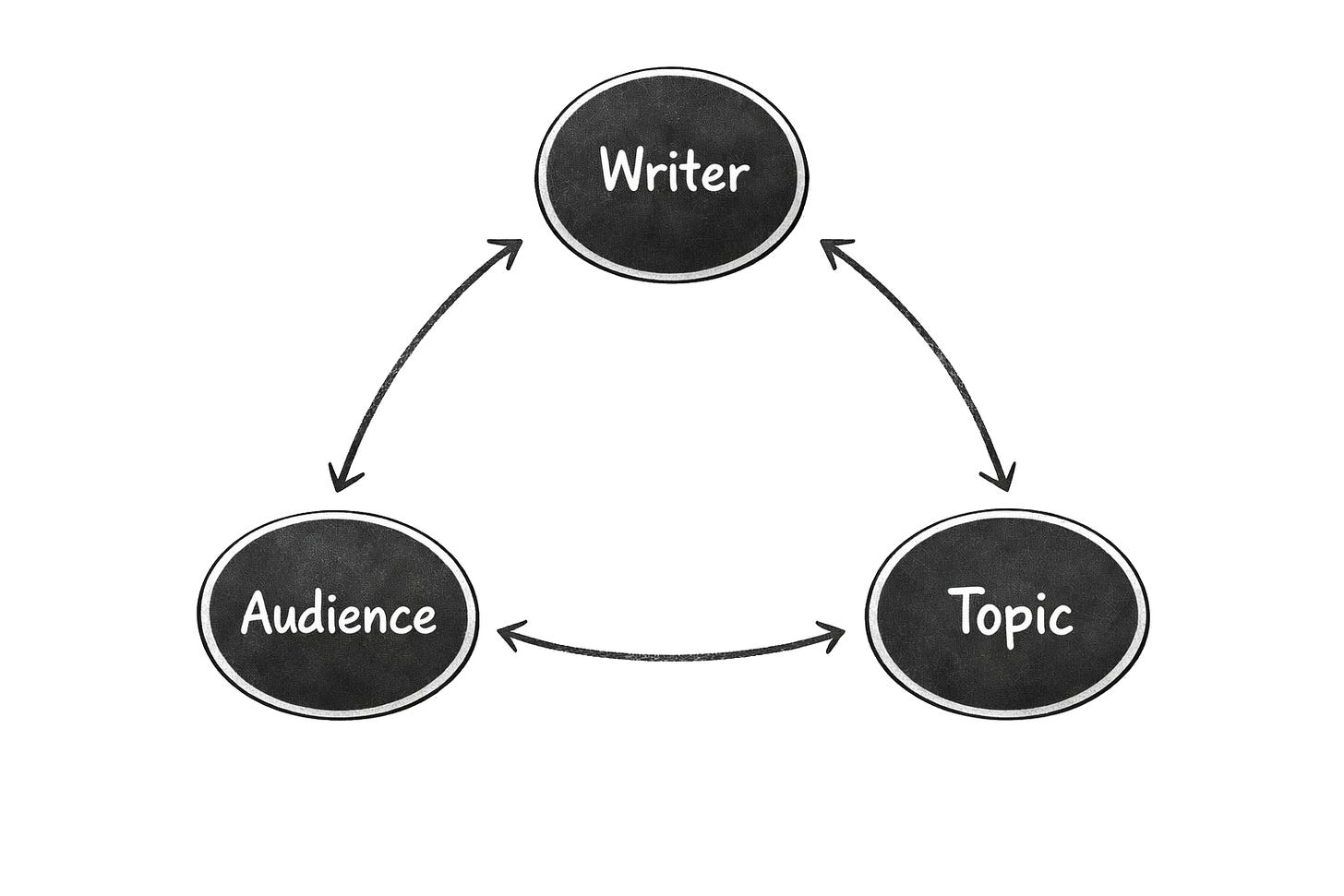

Let me use a graphic to explain this view more clearly.

If I write an essay, there are three variables in that process: myself as the writer, my audience, and the essay topic. Also, each of these variables is constantly changing.

A person is dynamic. They’re always in motion. Everything from how I’m feeling and what I’m currently reading to random mood swings and mental biases shapes how I write something.

An audience is dynamic in the sense that their relationship to reading is evolving. Attention spans change, content formats rise and fall in popularity, and, again, everything is in motion. For example, people who are familiar with LLMs have noticed that they tend to produce a lot of em dashes in their outputs, which in turn makes writers wary of using them, because they don’t want their work to be discounted as “just” generated by AI.

A topic is also dynamic to varying degrees depending on what we’re talking about. Some subjects like ancient history are more static, but generally the world is a fluid place.

To dial this up further, the relationships between these variables are changing as well! A writer shapes the expectations of their audience through what they write over time, and in turn a writer’s audience informs what that writer writes about when they show interest in one subject over another. Both the writer and their audience also have evolving views of a topic as time moves forward and new information emerges.

To tie this together: there’s a lot of social complexity that goes into writing an essay, and an LLM has limited visibility into most of that complexity, which limits its ability to write with the flair of a human or on behalf of one.

Further, this idea that LLMs lack context is more than a raw data problem; it’s a relationship problem. Human writers have a qualitatively different relationship to this information than LLMs do. I don’t have data about myself… I am me. I have an intuition about my audience. When I write about a topic, I don’t just know about it, I care about it.

LLMs don’t operate on the same plane. They have a view, but not my view. They have some context, and maybe often too much context, but not the context that’s relevant to playing this game well. And since an essay really is just a snapshot of a person’s brain at a moment in time, as a function of all these variables, human writing is a lot more defensible than some people suggest.

After chatting about these ideas with friends, I’ve heard a couple of good counterarguments to my issues with LLMs and writing. The most convincing of these is that everything will be solved when AI systems get access to infinite (or drastically larger) context windows.

This line of thinking says that if LLMs can offer users, say, 500 million tokens as a context window, then our machine companions will be able to reference everything about us: all our past pictures, writing, social media history, health data, recorded memories through things like wearable glasses, and so on. Then they’ll synthesize all of this data to approximate our decision-making process, which in turn would let them write (and think) on our behalf.

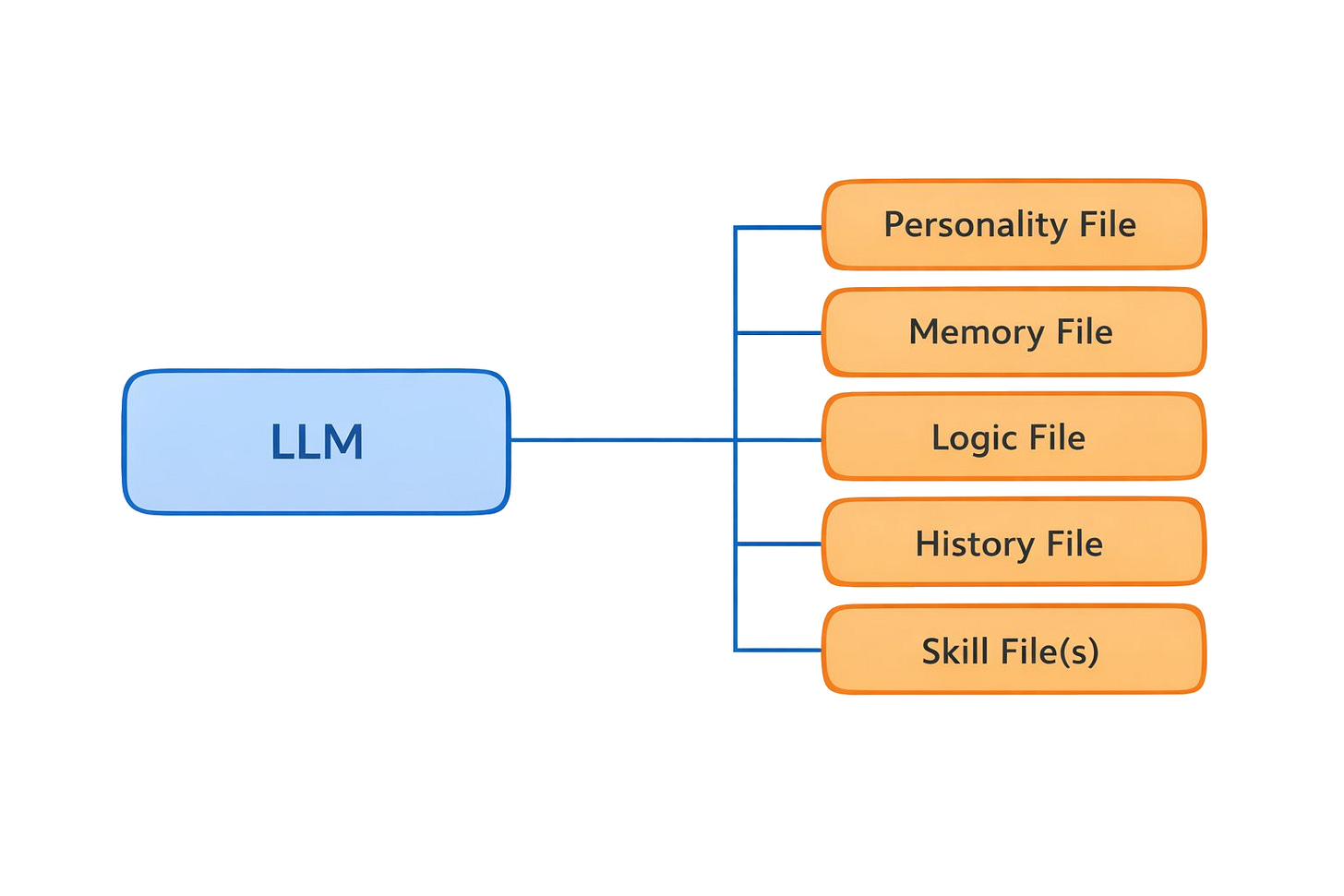

I do think there’s some merit to this. What’s happening right now with AI agents that initiate their sessions with access to memory files, conversation history, active skills, and other reference data is definitely gesturing at this future. See below for my basic bitch graphic.

I will say that I think this stuff is extremely cool, and I do think we’re going to get closer to modeling human consciousness through the combination of agent architectures and larger context windows. But no matter how weirdly fascinating it is to conjure up an agent with specific characteristics, I remain unconvinced that these systems will be able to write on my behalf.

I have two disagreements with this supersized context window argument, both of which are more philosophical than technical.

For one, even if you get infinite context windows, LLMs have a problem with the hierarchy of information. There’s no clarity around how much weight some information should carry relative to other information, or how that changes in different contexts. So, Claude might have access to my references, but it doesn’t know when and how to use them. It might know grammar and rules of composition, but it doesn’t know when I might paraphrase something versus quote it outright. And there are far too many exceptions to any rule to ever codify someone’s intuition.

Next, I just find the comparison between an LLM and a human mind misleading. We’ve talked about this a bit already. It’s a case where reasoning by analogy leads to the wrong conclusion because LLMs don’t behave the way human brains behave.

I do think LLMs are useful machines. I’ve used ~20,000 words of my writing as a reference point, written up rules around how an agent should make editorial decisions on my behalf, then with that context I’ve run ideas against the best models that exist today. It’s created some fun outputs. But in every case, something feels off.

In music terms, I think LLMs are like a cover band performing a song: they get aspects of it “correct,” but they’re never able to embody what makes the song special because they lack the essence embedded in the original musicians. I might have a great time listening to the music, but I’m not paying $400 for a ticket to see PISS live.

The AI maximalists will double down and make the case that, again, extra context is all you need to solve these issues, so one day an LLM will simply absorb mass amounts of your data and voila. Apparently, you can model everything about a human.

I disagree.

I’m not convinced we humans are even so legible to ourselves that we could articulate all the relevant context that an AI would require to mimic us.

My favorite example of this is apophenia (a term built from Greek roots), the tendency of humans to perceive connections or patterns in random data. We don’t know why this happens, but this feature of our operating system is why we see different shapes in clouds, where, for example, you might see Bart Simpson and I see Pikachu. That creative expression is unique to each of us. How do you model that?

It’s a bit ironic, but our irrationality might be the most defensible asset we have as far as creative endeavors go. That bug is our best feature in a world of rapidly advancing AI systems because it makes us impossible to model.

Adam Mastroianni put this well in a blog post last year where he said, “Lots of people worry that AI will replace human writers. But I know something the computer doesn’t know, which is what it feels like inside my head. There is no text, no .jpg, no .csv that contains this information, because it is ineffable. My job is to carve off a sliver of the ineffable, and to eff it.”

Okay, this has been long. There are other counterarguments to what I’ve laid out here. I just want to address one more.

Some folks bring up coding and point out that it’s a complex, creative activity like writing, and the follow-up question is: if LLMs have changed how coding is done to the point where it’s “solved,” why can’t writing be solved as well?

I’m no authority on coding, but I do see that LLMs have changed that activity. My pushback there is that coding and writing ultimately have a different telos.

The way you evaluate writing is whether it resonated with an audience or not. It’s about curating an entertainment experience for another human. Coding is evaluated by correctness: it’s functional where writing is experiential, and concrete where writing is fluffy.

There are for sure multiple ways to code a piece of software to accomplish a task, but there are more standards to follow than there are in writing. And as much as engineers care about the readability of code, the ultimate audience for it is a compiler, not a human.

I think LLMs work well in coding because it’s a coherent system: an agent can create code, run tests, iterate, then verify whether it will execute or not. With writing, that doesn’t apply, because it’s not a computational exercise; it’s an interpretive one. As a result, LLM code is mostly coherent these days, and when it’s not, it can be concretely edited. LLM writing is way less coherent.
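Here’s a rough Python sketch of that create, test, iterate loop. Everything in it is hypothetical: the two “drafts” stand in for successive attempts an agent might generate, and `passes_tests` stands in for a real test suite. The point is just the shape of the loop. Code comes with a cheap, objective pass/fail signal, so a system can keep generating until something passes, and there’s no equivalent `passes_tests` for whether an essay resonates with a reader.

```python
from typing import Callable, List, Optional

Draft = Callable[[List[int]], int]


def draft_one(nums: List[int]) -> int:
    """Hypothetical first attempt: buggy, it returns the first item, not the largest."""
    return nums[0]


def draft_two(nums: List[int]) -> int:
    """Hypothetical second attempt: scan the list and keep the largest value seen."""
    best = nums[0]
    for n in nums[1:]:
        if n > best:
            best = n
    return best


def passes_tests(fn: Draft) -> bool:
    """Objective check: run known inputs and compare against expected answers."""
    cases = [([3, 1, 2], 3), ([-5, -2, -9], -2), ([7], 7)]
    return all(fn(inputs) == expected for inputs, expected in cases)


def first_passing(drafts: List[Draft]) -> Optional[Draft]:
    """Generate-test-iterate: try each draft in order until one passes the tests."""
    for draft in drafts:
        if passes_tests(draft):
            return draft
    return None


if __name__ == "__main__":
    winner = first_passing([draft_one, draft_two])
    print(winner.__name__ if winner else "no draft passed")  # draft_two
```

Note that `draft_one` happens to pass the first test case and only fails on the second, which is exactly why a suite of checks, rather than one spot check, is what makes the loop trustworthy.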

An analogy I like for LLM writing outputs is that they’re similar to the bar scene from Good Will Hunting, where a dweebie Harvard student presents what appears to be a fancy argument about US history, but it turns out he’s borrowing someone else’s thinking, and Will goes, “Were you going to plagiarize the whole thing for us? Do you have any thoughts of your own on this matter?”

That’s what it’s like talking to ChatGPT. It’s more like the illusion of intelligence than actual intelligence.

As I wind this down, I want to say that I’m optimistic about AI. I see LLMs as a complement to our intelligence, not a substitute for it. They’re super useful tools, and I use them in my writing today for ideation and sometimes for editorial feedback.

That said, even in a world where we see significant improvements in AI systems, I find it unconvincing that they will replace human writers. There’s a special je ne sais quoi about being human and the way we hallucinate our own custom models of the world based on a lifetime of experiences that I don’t think any machine will replicate.

Now, could I be wrong? Possibly. Will GPT-33 be able to write perfect essays? We’ll see. Models have surprised me, and I’m sure they will continue to.

But my view is that good writing requires something only humans have, which is the ability to struggle and to speak about that struggle from the point of view of being in the trenches of life. It is sense-making from one human to another, and in my mind, as long as humans endure as a species, there will be an audience for that.

Until then, keep writing.

A massive thank you to Devin, Finn, Nuk, and Luffistotle for feedback and review.

We will be featuring good writing and writers we love from time to time. If you have recommendations, send them our way.

Token Dispatch is a daily crypto newsletter handpicked and crafted with love by human bots. If you want to reach the Token Dispatch’s community of 200,000+ subscribers, you can explore partnership opportunities with us 🙌


Disclaimer: This newsletter contains analysis and opinions of the author. Content is for informational purposes only, not financial advice. Trading crypto involves substantial risk; your capital is at risk. Do your own research.