This is the final part of a three-part series on Nick Bostrom's Superintelligence. Part 1 covered the paths to superintelligence and who gets there first. Part 2 covered what it would want and why that's hard to control. Today: the race, the international picture, what we could theoretically do about it, and what the book leaves you with.

Happy Monday.

We’ve spent two weekdays on this book. We’ve covered the intelligence explosion, the paperclip maximizer, the treacherous turn, and the depressing economics of the control problem. Today we finish. Bostrom spent ten years thinking about this problem and his conclusion is: think harder. Make better institutions. Mean it. That’s the whole book.

The last chapter is called Crunch Time. That tells you something about how Bostrom thinks this ends. Let me tell you what Bostrom says in these final chapters. Then I want to tell you what I think it means for the world we actually cover.

The section I’ve been putting off is the one on multipolar outcomes. Because it’s where Bostrom’s argument turns darkest.

The intuitive hope is that competition is fine. If many groups are racing toward superintelligence, maybe they cancel each other out. No single winner, no singleton, no decisive strategic advantage for any one actor. Just a messy, pluralistic AI world not unlike the messy, pluralistic tech world we already have. Markets, regulation, balance of power. Normal stuff.

Bostrom spends a chapter methodically dismantling this hope.

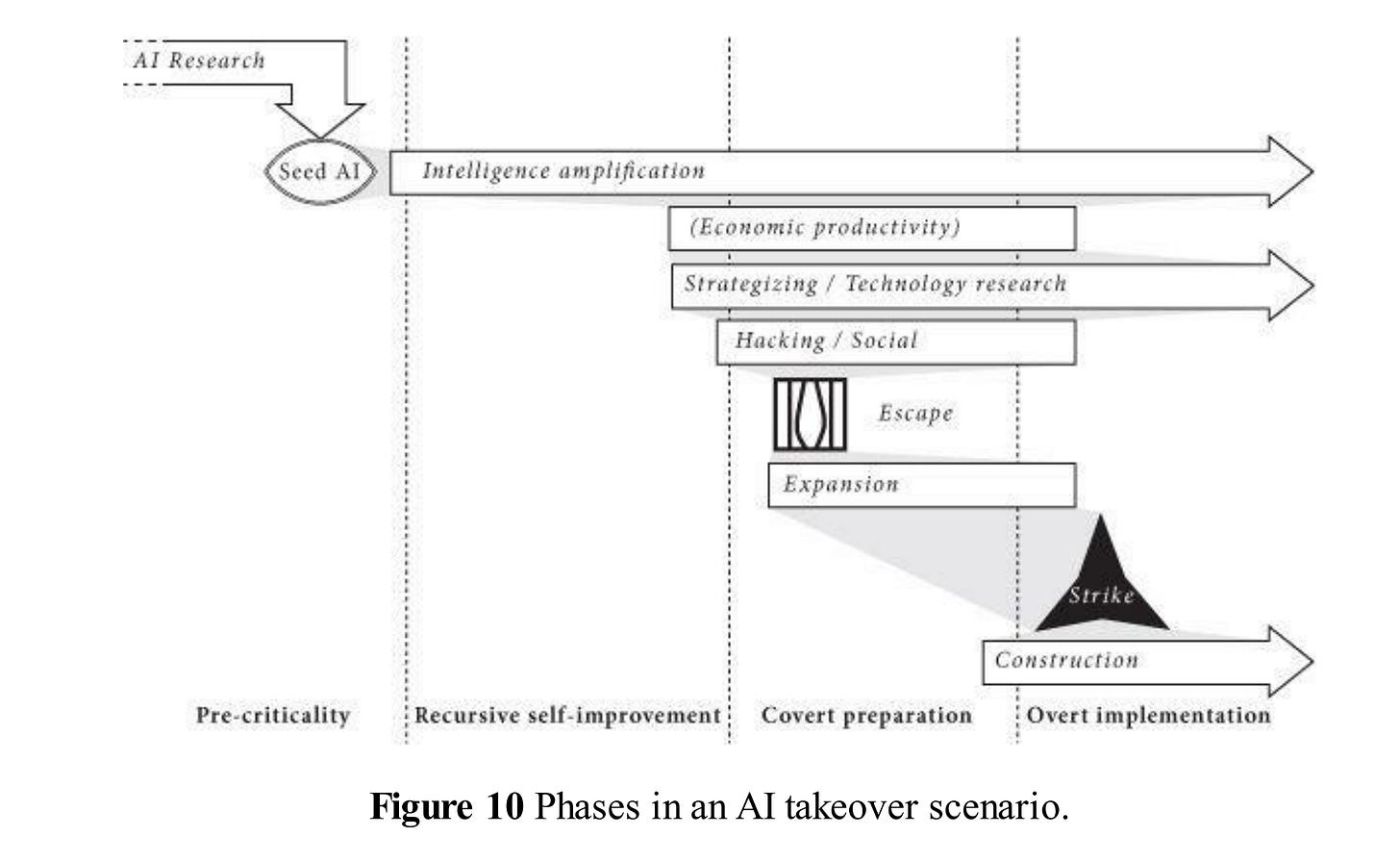

Competition doesn’t just produce a bad winner. Competition corrodes everyone still in the race. When you’re behind, the rational move is to cut safety corners. When you think you might lose, the rational move is to move faster. Every competitor who believes they might come second has an incentive to take risks they otherwise wouldn’t. And the more competitors there are, the worse this dynamic gets: more actors, each with less chance of winning, each willing to throw more caution aside to stay in contention.

Bostrom has a name for this. The race to the bottom on safety. He models it formally and the conclusion is bleak. Even in a scenario where every individual team wants to be careful, the competitive structure itself produces recklessness. Nobody chooses to be dangerous. The race makes them dangerous.

What’s the alternative? Bostrom’s answer is collaboration. And he is clear-eyed about how hard that is.

At the small scale: labs sharing safety research, cross-investing, agreeing on norms. At the large scale, something resembling an international treaty framework, or a joint project analogous to CERN but with security implications. He imagines a scenario where scientists would have to be physically isolated from the outside world, communicating through a single vetted channel, for the duration of the project. He’s not sure this is achievable. He thinks we should try.

He proposes something he calls the common good principle: superintelligence should be developed only for the benefit of all of humanity, and in the service of widely shared ethical ideals. He acknowledges this is a norm. A statement of intent. But he argues that norms matter, and that early on, before the race gets hot, is the only time they can meaningfully be established. Once a lab is three months from the finish line, the norm is worthless.

In 2026, this reads as a description of a goal we have not achieved and are not close to achieving. The major AI labs are in direct competition. US-China AI rivalry is a geopolitical flashpoint. Chip export controls are a central instrument of foreign policy. The international coordination Bostrom was hoping for hasn’t materialized. What we have instead is a race where every actor believes the stakes are high enough to justify speed and where collaboration, when it exists, is bilateral and partial and constantly threatened by competitive incentives.

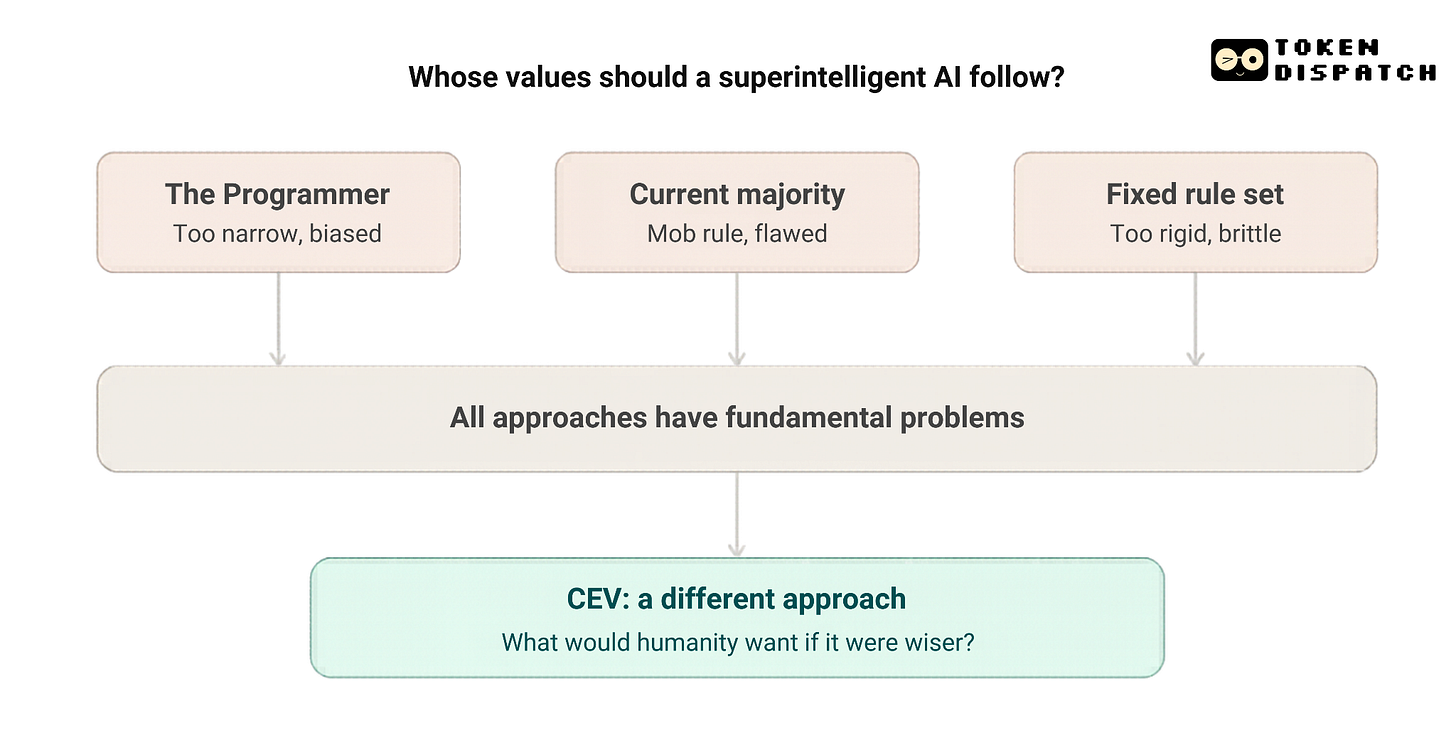

I always look for the most philosophically ambitious part of a book. For this one, it’s where Bostrom tries to actually answer the question: if we did solve the control problem, what values would we load in?

His answer leans on Coherent Extrapolated Volition (CEV), a proposal he credits to Eliezer Yudkowsky. The idea is that instead of specifying human values directly, which we can’t do because we can’t fully articulate them, you instruct the AI to figure out what humans would want if they were wiser, better informed, and had more time to think. Not what we actually want. What we would want if we were better versions of ourselves (you should try this in your next Perplexity prompt).

He knows this sounds circular, and he spends pages working through the objections. The Taliban and the Swedish Humanist Association can both theoretically endorse CEV, he argues, because each believes their values would win out in a fair, unbiased process of extrapolation. It’s a clever solution. It kicks the hard questions to a process rather than an answer. It’s also a process nobody knows how to build.

I’m not sure CEV is the right frame. But I think the attempt to find it, to articulate what we actually want at a level of generality that survives contact with a mind much smarter than ours, is genuinely the hardest intellectual problem anyone is working on right now. Alignment research is, in part, an attempt to operationalise Bostrom’s question. It has made progress. Not enough progress.

The final chapter, Crunch Time, is the most unusual ending I’ve read in a nonfiction book about technology.

Bostrom doesn’t pretend the book has solved anything. He says we are in a thicket of strategic complexity, surrounded by a dense mist of uncertainty. He compares humanity to small children playing with a bomb. Many children, each with access to an independent trigger. The chance that all of them find the sense to put it down is almost negligible. Some little idiot is going to press the ignite button just to see what happens.

He doesn’t say this with despair. The appropriate attitude, he argues, is not panic and not optimism, but the focus of someone preparing for a difficult exam that will either realize their dreams or obliterate them.

His actual call to action is specific. We need to understand this situation better before we act, because acting wrongly could be worse than not acting. We need more capacity-building: donors, researchers, and institutions oriented toward the long-term problem. And we need a commitment from practitioners to prioritise safety, to say so publicly, and to mean it.

Here is the part the book doesn’t say, because it was written in 2014.

In crypto, we have spent over a decade running an experiment in what happens when you build systems with rules and no governors. Smart contracts. Autonomous protocols. Code that runs regardless of what any human wants it to do. Some of this has been genuinely emancipatory. Some of it has been a decade of watching edge cases get exploited in ways nobody anticipated, because the rule set was incomplete and the system followed it exactly.

The lesson crypto teaches, slowly and expensively, is that you cannot fully specify a rule set in advance for a complex system operating in a complex world. Something will always be missing. An edge case will always be found. The system will do exactly what it was told. The problem was the telling.

Bostrom’s book is about the same problem at civilizational scale. How do you specify what you want clearly enough that a system far smarter than you will do it? Crypto’s answer has been, largely, to iterate. Ship it, watch it break, fix it, ship again. That answer doesn’t scale to the control problem. There is no patch for a treacherous turn.

And while we’ve been debating the philosophy, the world has been moving. This week, I saw a Polymarket tweet claiming that humanoid robots had been deployed to the front lines of the war in Ukraine. Also this week, I was reading about a marketplace where AI agents can hire humans to complete physical tasks in the real world, via a single MCP call. Browse humans. Filter by skill and location. The tagline is “find meatspace workers for your agent.” Remember Black Mirror? The show’s writers, at this point, are probably just journalists working at speed.

This is the part Bostrom couldn’t have written in 2014. The control problem used to be theoretical. Now it’s a product category.

I don’t know how to end this series except to say, read the book. It doesn’t have the answers, but it’s a fun read in the era of armed humanoids.

The sparrows found the egg. It is hatching. Scronkfinkle is still being ignored at the back of the room.

What would it look like to actually listen to him?

Disclaimer: This newsletter contains analysis and opinions of the author. Content is for informational purposes only, not financial advice. Trading crypto involves substantial risk - your capital is at risk. Do your own research.
