Hello,

The promise that AI agents will transform the internet landscape is gradually becoming a reality. They have evolved beyond experimental tools inside chat windows and have become an integral part of day-to-day operations, from clearing inboxes and scheduling meetings to responding to support tickets, all while quietly improving productivity in ways that often go unnoticed.

However, this growth is not just anecdotal.

By 2025, automated traffic surpassed human traffic on the web, accounting for 51% of total activity. AI-driven traffic to U.S. retail sites alone grew by 4,700% year over year. AI agents are now operating across internal systems, with many able to access data, trigger workflows, and even initiate transactions.

Yet confidence in fully autonomous agents has fallen from 43% to 22% within a year, largely due to rising security incidents. Nearly half of enterprises still authenticate agents using shared API keys, a method never designed for autonomous systems that move value or act independently.

The problem here is that agents are scaling faster than the infrastructure meant to govern them.

In response, entirely new protocol stacks are emerging. Stablecoins, card network integrations, and agent-native standards like x402 are enabling machine-initiated transactions. At the same time, new identity and verification layers are being developed to help agents identify themselves and operate within a structured environment.

But enabling payments is not the same as enabling an economy. Once agents can move value, more fundamental questions arise. How do they discover the right services in a machine-readable way? How do they prove identity and authorisation? How do we verify that what they claim to have executed actually happened?

In this piece, I will explore the infrastructure needed for an agent-driven economy to function at scale and assess whether these layers are mature enough to support persistent, autonomous participants operating at machine speed.

Agents Cannot Buy What They Cannot See

Before an agent can pay for a service, it has to find it. That may sound trivial, but this is where most of the friction currently resides.

The web was built for people to read pages. When a human searches for something, search engines return ranked links. These pages are optimised for persuasion. They are filled with layouts, trackers, ads, navigation bars, and stylistic elements that make sense to a person but are largely noise to a machine.

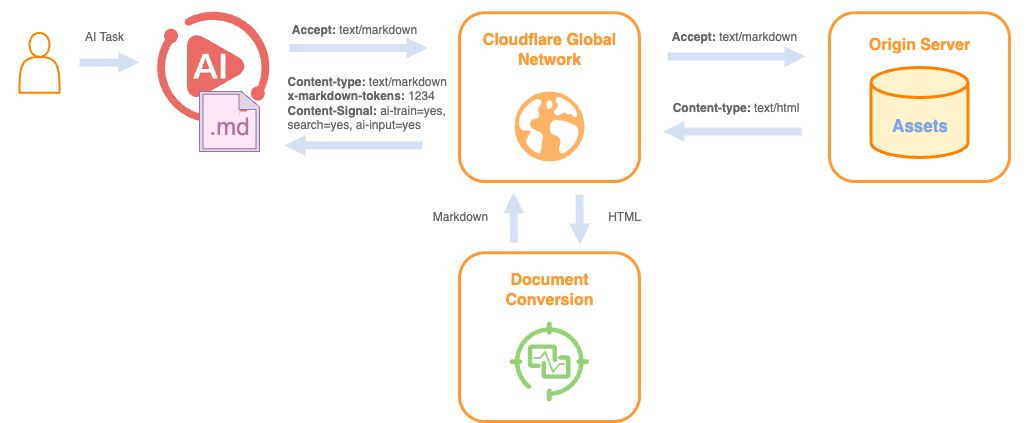

When an agent requests the same page, it receives raw HTML. A typical blog post or product page may require roughly 16,000 tokens in that form. When converted into a clean Markdown file, the token count drops to about 3,000. That is roughly an 80% reduction in what the model has to process. For a single request, this difference may not matter much. But when an agent makes thousands of such requests across multiple services, the excess processing compounds into higher latency, higher costs, and greater reasoning complexity.
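To make the compounding concrete, here is a back-of-the-envelope sketch using the two token counts above. The function name and the figure of 10,000 requests are assumptions chosen for illustration, not measurements.

```python
def token_savings(html_tokens: int, markdown_tokens: int, requests: int = 1) -> dict:
    """Compare the cost of reading raw HTML with reading clean Markdown."""
    saved_per_request = html_tokens - markdown_tokens
    return {
        # Share of tokens the model no longer has to process.
        "reduction": saved_per_request / html_tokens,
        # Total tokens saved across all requests.
        "tokens_saved": saved_per_request * requests,
    }


# 16,000 and 3,000 come from the text; 10,000 requests is a hypothetical workload.
result = token_savings(16_000, 3_000, requests=10_000)
print(f"{result['reduction']:.2%}")   # 81.25%
print(f"{result['tokens_saved']:,}")  # 130,000,000
```

At that hypothetical volume, the agent would process 130 million tokens of markup that add nothing to its decision.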

Agents end up spending significant computational effort stripping away interface elements before they can access the core information required to act. This effort does not improve the output. It simply compensates for a web that was never designed for them.

This inefficiency becomes more visible as agent-driven traffic grows. AI-driven crawling of retail and software websites has increased sharply over the past year, and automated traffic as a whole now represents a majority of total web activity. At the same time, around 79% of major news and content sites block at least one AI crawler. From their perspective, the reaction is understandable. Agents extract content without interacting with ads, subscriptions, or traditional conversion funnels. Blocking them protects revenue.

The problem is that the web does not have a reliable way to distinguish between a malicious scraper and a legitimate purchasing agent. Both appear as automated traffic. Both originate from cloud infrastructure. To the system, they look identical.

The deeper issue is that agents are not trying to consume pages. They are trying to discover possibilities for action.

When a human searches for “book a flight under $500,” a ranked list of links is sufficient. The person can compare options and decide. When an agent receives the same instruction, it needs something entirely different. It needs to know which services accept booking requests, what input format is required, how pricing is calculated, and whether payment can be settled programmatically. Very few services expose that information clearly.

This is why the conversation is shifting from search engine optimisation to agent-oriented discoverability, often referred to as AEO. Ranking on a search page becomes less relevant if the end user is an agent. What matters is whether a service can describe its capabilities in a way that an agent can interpret without guesswork. If it can’t, it risks becoming invisible to a growing share of economic activity.
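As a rough sketch of what "describing capabilities without guesswork" could look like, the snippet below models one service action as structured data and lets an agent check whether it can act on it. The `Capability` class, its field names, and the flight-booking values are all hypothetical; they do not come from any published standard.

```python
from dataclasses import dataclass


@dataclass
class Capability:
    """Hypothetical machine-readable description of one action a service offers."""
    action: str                      # e.g. "book_flight"
    required_inputs: dict[str, str]  # field name -> expected type
    pricing: str                     # how the price is calculated
    programmatic_payment: bool       # can an agent settle without a human?


def usable_by_agent(cap: Capability, request: dict) -> tuple[bool, list[str]]:
    """Return whether an agent can act on this capability, plus any blockers."""
    problems = [
        f"missing input: {name}"
        for name in cap.required_inputs
        if name not in request
    ]
    if not cap.programmatic_payment:
        problems.append("payment requires a human checkout")
    return (not problems, problems)


flight_booking = Capability(
    action="book_flight",
    required_inputs={"origin": "str", "destination": "str", "max_price_usd": "float"},
    pricing="fare + fixed booking fee",
    programmatic_payment=True,
)

ok, problems = usable_by_agent(
    flight_booking,
    {"origin": "SFO", "destination": "JFK", "max_price_usd": 500.0},
)
incomplete_ok, incomplete_problems = usable_by_agent(flight_booking, {"origin": "SFO"})
```

The point of the sketch is that the agent never parses a page: it reads a description, sees exactly what is missing, and either acts or moves on.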

Agents Need Identity

Once agents can discover services and initiate transactions, the next major problem is for the system on the other end to know who it is dealing with. In other words, identity.

Financial systems already operate with far more machine identities than human ones: in finance, non-human identities outnumber humans by roughly 96 to 1. APIs, service accounts, automation scripts, and internal agents dominate institutional infrastructure. Most of them were never designed to hold discretionary authority over capital. They execute predefined instructions. They cannot negotiate, choose vendors, or initiate payments across open networks.

Autonomous agents change that boundary. If an agent can move stablecoins directly or trigger checkout flows without manual confirmation, the central question shifts from “Can it pay?” to “Who authorised it to pay?”

This is where identity becomes foundational, and the idea of “Know Your Agent” takes shape.

Just as financial institutions verify customers before allowing them to transact, services interacting with autonomous agents must verify three things before granting access to capital or sensitive operations:

Cryptographic authenticity: Is this agent provably in control of the key it claims to use?

Delegated authority: Who granted this agent permission, and what are its limits?

Real-world linkage: Is the agent tied to a legally accountable entity?
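The three checks above can be sketched as a single gate that a service runs before admitting an agent. This is a minimal illustration under loud assumptions: the `Mandate` record and its fields are hypothetical, and HMAC with a shared key stands in for the public-key signatures a real deployment would use.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass
class Mandate:
    """Hypothetical delegation record: who authorised the agent, and up to what limit."""
    principal: str            # legally accountable entity, e.g. a registered company
    spend_limit_usd: float    # ceiling the principal granted to the agent
    principal_verified: bool  # in a real system, asserted by an identity provider


def sign(key: bytes, message: bytes) -> str:
    # Stand-in only: HMAC uses a shared secret, unlike real public-key signing.
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def know_your_agent(key: bytes, message: bytes, signature: str,
                    mandate: Mandate, amount_usd: float) -> list[str]:
    """Run the three checks; an empty list means the agent is admitted."""
    failures = []
    if not hmac.compare_digest(sign(key, message), signature):
        failures.append("cryptographic authenticity")
    if amount_usd > mandate.spend_limit_usd:
        failures.append("delegated authority")
    if not mandate.principal_verified:
        failures.append("real-world linkage")
    return failures


key = b"demo-key"
message = b"pay 120.00 USD to merchant-42"
mandate = Mandate(principal="Example Corp", spend_limit_usd=200.0, principal_verified=True)

admitted = know_your_agent(key, message, sign(key, message), mandate, 120.0)
rejected = know_your_agent(key, message, "bad", mandate, 500.0)
```

A valid signature on its own would not have saved the second request: the amount exceeds what the principal delegated, which is exactly the gap between "Can it pay?" and "Who authorised it to pay?"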

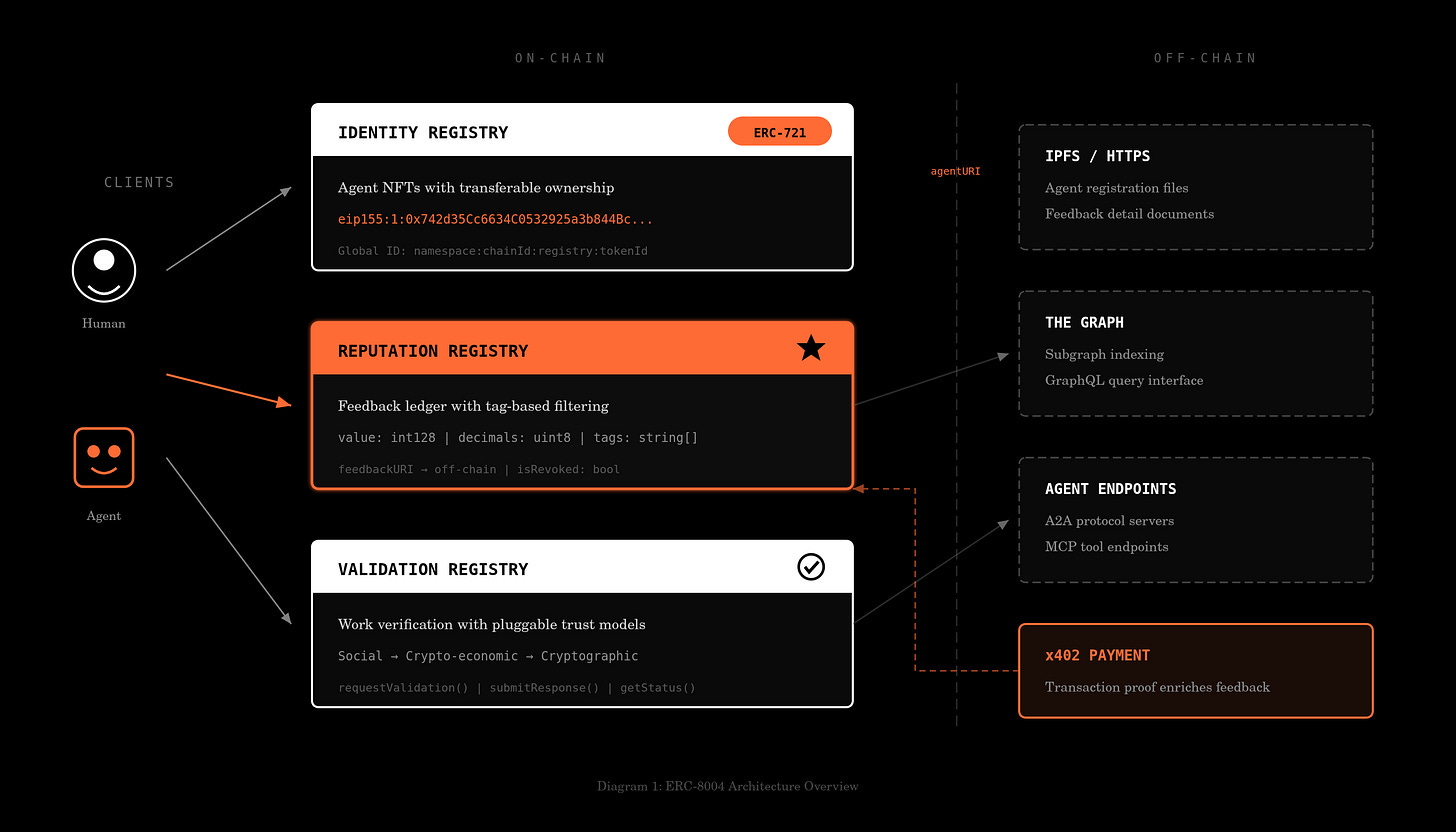

Together, these checks form an identity stack. At the base sits cryptographic key generation and signing. Standards such as ERC-8004 attempt to formalise how agents can anchor identities on-chain in a verifiable registry. A signed request proves control of a key but nothing more.

Above that sits an identity provider layer. This connects the key to a real-world entity, such as a registered company, financial institution, or verified individual. Without this binding, a signature proves control but not accountability.

At the edge sits verification infrastructure. Payment processors, CDNs, or application servers validate signatures, check associated credentials, and enforce permission boundaries in real time. Visa’s Trusted Agent Protocol is an example of permissioned commerce, which enables merchants to verify that an agent is authorised to transact on behalf of a specific user. Stripe’s Agentic Commerce Protocol (ACP) is pushing similar checks into programmable checkout and stablecoin flows. It defines exactly how to structure a cart, generate a payment, and complete a checkout.

Alongside this, the Universal Commerce Protocol (UCP), led by Google and Shopify, allows merchants to publish “capability manifests” that agents can discover and negotiate with. It acts as an orchestration layer and is expected to integrate into Google search and Gemini.

The important nuance is that permissionless and permissioned systems will coexist.

On public blockchains, agents can transact without centralised gatekeepers. That increases speed and composability, but it also intensifies compliance pressure. Stripe’s acquisition of Bridge highlighted this tension. Stablecoins enable near-instant cross-border transfers, but compliance obligations do not disappear simply because settlement occurs on-chain.

This tension inevitably pulls regulators into the picture. Once autonomous agents can initiate financial transactions and interact with markets without direct human oversight, questions of accountability become unavoidable. Financial systems cannot allow capital to move through unidentified or unauthorised actors, even if those actors are pieces of software.

Regulatory frameworks are already being adopted. The Colorado AI Act, originally scheduled to take effect on February 1, 2026 and since delayed to June 30, 2026, introduces accountability requirements for high-risk automated systems, and similar legislation is advancing globally. As agents begin executing financial decisions at scale, identity cannot remain optional. If discovery makes agents visible, identity is what makes them admissible.

Verifying Agent Execution and Reputation

Once an agent begins executing tasks that involve money, contracts, or sensitive information, just having an identity might not be enough. A verified agent can still hallucinate, misrepresent its work, leak information, or simply perform poorly.

The most important question, then, is whether it can be proved that the agent did what it claimed.

If an agent states that it analysed 1,000 documents, detected fraud patterns, or executed a trading strategy, there must be a way to verify that this computation actually occurred and that the output was not fabricated or corrupted. That is the job of a performance layer.

There are three approaches to achieve this:

TEEs (Trusted Execution Environments): The first approach relies on hardware-based attestation through Trusted Execution Environments such as AWS Nitro Enclaves and Intel SGX. In this model, the agent runs within a secure enclave that issues a cryptographic attestation confirming that specific code was executed on specific data without tampering. The overhead is typically modest, around 5-10% additional latency, which may be acceptable for financial and enterprise-grade use cases where integrity matters more than raw speed.

ZKML (Zero-Knowledge Machine Learning): The second approach is mathematical. ZKML enables an agent to generate a cryptographic proof that an output was produced by a specific model, without revealing model weights or private inputs. Lagrange Labs’ DeepProve-1 recently demonstrated a full zero-knowledge proof of GPT-2 inference, achieving speeds 54-158 times faster than prior methods.

Restaked Security: The third model enforces correctness economically rather than computationally. Protocols such as EigenLayer introduce staking-based security, in which validators stake capital behind the agent’s outputs. If an output is challenged and proven false, the stake can be slashed. Instead of proving every computation, the system makes dishonest actions financially irrational.
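The phrase "financially irrational" in the staking model can be made concrete with a simple expected-value calculation. This is a simplified sketch, not a description of how EigenLayer or any specific protocol sets stakes: it assumes a single dishonest output, a fixed gain from cheating, and a fixed probability of being challenged and slashed.

```python
def cheating_is_rational(gain: float, stake: float, p_caught: float) -> bool:
    """True if the expected payoff from a dishonest output is positive."""
    # Keep the gain if not caught; lose the whole stake if caught (a simplification).
    expected_payoff = (1 - p_caught) * gain - p_caught * stake
    return expected_payoff > 0


def break_even_stake(gain: float, p_caught: float) -> float:
    """Stake at which the expected payoff from cheating is exactly zero."""
    return gain * (1 - p_caught) / p_caught


# Hypothetical numbers: cheating would earn 10,000 and is caught half the time.
print(cheating_is_rational(gain=10_000, stake=5_000, p_caught=0.5))   # True
print(cheating_is_rational(gain=10_000, stake=20_000, p_caught=0.5))  # False
print(break_even_stake(gain=10_000, p_caught=0.25))                   # 30000.0
```

The last line shows why detection matters as much as stake size: under these assumptions, if cheating is caught only a quarter of the time, the stake has to be three times the potential gain before dishonesty stops paying.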

Each of these mechanisms addresses the same problem from a different angle. However, execution proofs are episodic. They verify a single task, but markets require something cumulative. This is where reputation becomes essential.

Reputation transforms isolated proofs into a longitudinal performance history. Instead of relying on platform-specific reviews or opaque internal dashboards, emerging systems aim to make agent performance portable and cryptographically anchored.

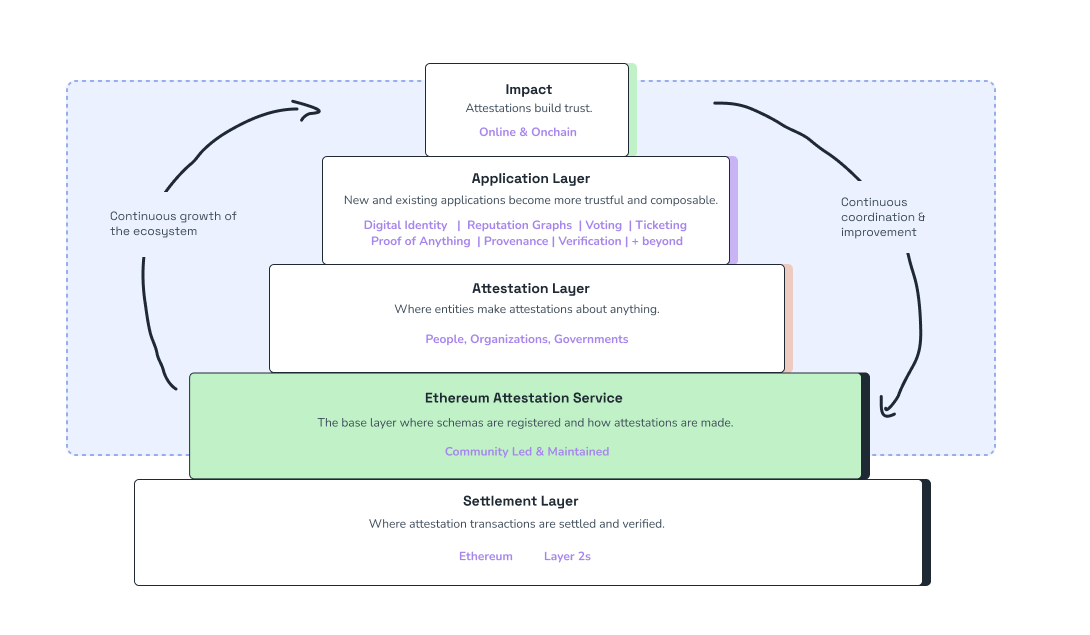

The Ethereum Attestation Service (EAS) allows users or services to issue signed, on-chain attestations regarding an agent’s behaviour. A successful task completion, an accurate forecast, or a compliant transaction can be recorded in a tamper-resistant way that travels with the agent across applications. These attestations are not marketing claims; they are signed records.

Competitive benchmarking environments are also forming. Agent arenas evaluate agents against standardised tasks and rank them using a scoring system such as Elo. Recall Network reports over 110,000 participants generating 5.88 million forecasts, creating measurable performance data rather than anecdotal reputation. We explored how these prediction arenas work in more detail in a previous piece on the PageRank problem for agents. As these systems scale, they begin to resemble real rating markets for AI agents.
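For readers unfamiliar with Elo, the sketch below shows the standard rating update applied to two agents after a head-to-head task. The K-factor of 32 and the starting rating of 1,500 are common defaults assumed here for illustration; how any particular arena, including Recall Network, actually scores agents is not covered by this example.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo model."""
    return 1 / (1 + 10 ** ((rating_b - rating_a) / 400))


def elo_update(rating_a: float, rating_b: float, score_a: float,
               k: float = 32) -> tuple[float, float]:
    """Return new ratings; score_a is 1 for an A win, 0.5 for a draw, 0 for a loss."""
    exp_a = expected_score(rating_a, rating_b)
    new_a = rating_a + k * (score_a - exp_a)
    # B's change mirrors A's, so total rating points are conserved.
    new_b = rating_b + k * ((1 - score_a) - (1 - exp_a))
    return new_a, new_b


# Two agents start level; agent A wins the task.
agent_a, agent_b = elo_update(1500, 1500, score_a=1)
print(agent_a, agent_b)  # 1516.0 1484.0
```

Because the update depends on the expected result, beating a much higher-rated agent moves the score more than beating a peer, which is what lets a ranking adjust as quality rises or drops.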

This makes reputation portable across platforms.

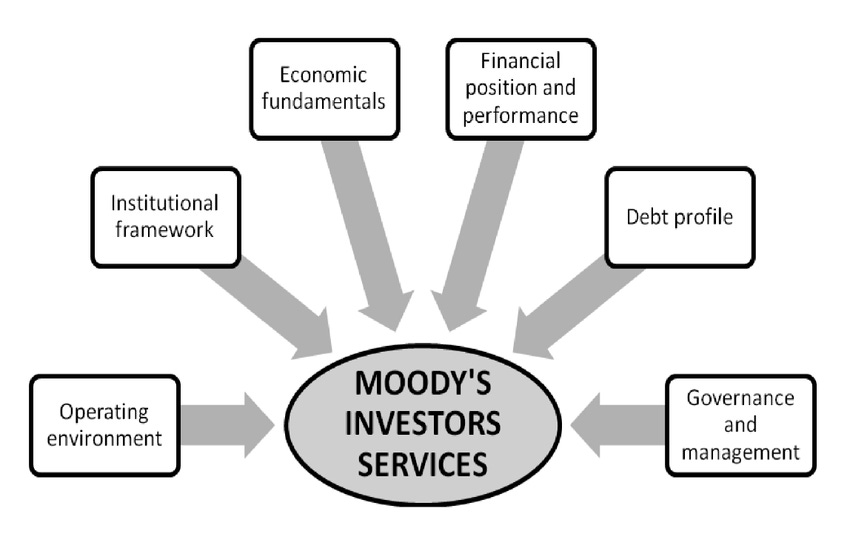

In traditional finance, institutions like Moody’s rate bonds to signal creditworthiness. An agent economy will require an equivalent layer that rates non-human actors. Markets will need to assess whether an agent is reliable enough to delegate capital to, whether its outputs are statistically consistent, and whether its behaviour remains stable over time.

As agents begin operating with real authority, markets will need a clear way to measure their reliability. Instead of scattered platform reviews, agents will carry portable performance records built on verified execution and benchmarking, with scores that adjust if quality drops and permissions that are traceable to explicit authorisation. Insurers, merchants, and compliance systems will rely on this data to decide which agents can access capital, data, or regulated workflows.

Taken together, these layers begin to form the basic infrastructure of an agent economy. Agents must first be able to discover services in a machine-readable way. They must then prove who they are and who authorised them to act. And finally, they must build a verifiable track record that proves they can be trusted to execute. Without discoverability, agents cannot find opportunities. Without identity, they cannot be admitted into systems. And without reputation, they cannot earn sustained economic trust.

That’s it for today. See you next week.

Until then, stay curious!

Token Dispatch is a daily crypto newsletter handpicked and crafted with love by human bots. If you want to reach the Token Dispatch community of 200,000+ subscribers, you can explore partnership opportunities with us 🙌


Disclaimer: This newsletter contains analysis and opinions of the author. Content is for informational purposes only, not financial advice. Trading crypto involves substantial risk - your capital is at risk. Do your own research.

The “know your agent” framing is exactly right, and the enterprise version of this problem is harder than it looks. It’s not just knowing what the agent can do — it’s knowing what it can access, what it can modify, and what it can trigger downstream. AWS just shipped IAM for MCP Servers specifically because enterprise deployments need the same identity and permissions controls for agents that they already have for human employees. The framework matters less than the harness. Memory, identity, tool permissions, audit trails — that’s where production agent deployments succeed or fail.
